Soti MobiControl with Linux support

At its in-house conference Soti Sync in Niagara Falls, Soti is announcing support for Linux devices in its EMM solution Soti MobiControl 14.

Source: Heise Tech News

Microsoft plans to release its new Office suite for Windows at the end of 2018. It is called Office 2019 and will apparently continue to be sold outright rather than offered only as a subscription through Office 365. Earlier reports had suggested that Microsoft wanted to switch entirely to a subscription model.

Source: Golem

Announced in January 2016 as Project Toscana, Watson Workspace has been in preview status since Watson World in October 2016. Until now an invitation was required; now anyone can sign up free of charge.

Source: Heise Tech News

The city of Munich will begin migrating to a new groupware in October, starting with an overhaul of the mail system. Apparently, however, it will use Microsoft Exchange rather than Kolab, the open-source winner of the tender.

Source: Heise Tech News

Apple released iOS 11.0.1 on Tuesday evening, only seven days after the first official version of iOS 11.

Source: Heise Tech News

Trump in Cleveland in July 2016.

Chip Somodevilla / Getty Images

The new 280 character limit isn't for everyone. In a blog post, Twitter wrote that “we want to try it out with a small group of people before we make a decision to launch to everyone.”

But Biz Stone, a co-founder of Twitter, tweeted Tuesday evening that Trump is not in the 280 character test group. A Twitter spokesperson later confirmed to BuzzFeed News that Trump was not included, explaining that the test group was selected at random.

Case in point, Trump has used Twitter this week to sustain his growing feud with NFL players who kneel during the national anthem before games. Since entering politics and winning the presidency, Trump has similarly used Twitter to lash out at a dizzying array of enemies in both politics and popular culture.

His tweets regularly rack up thousands of retweets and end up screenshotted and shared on cable news for days.

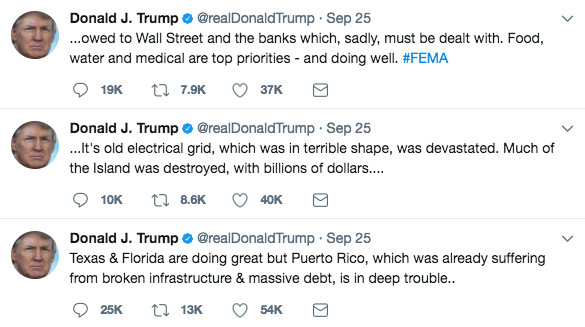

Twitter / Via Twitter: @realDonaldTrump

On Monday, for example, Trump weighed in on the ongoing crisis in Puerto Rico, as the island grapples with the aftermath of Hurricane Maria. The president's tweets drew some criticism, however, for focusing on Wall Street and the electrical grid.

Trump has also tweeted about the escalating situation in North Korea, referring to dictator Kim Jong Un as “Little Rocket Man” and warning “they won't be around much longer!”

North Korea's foreign minister responded by saying that the tweet amounted to a declaration of war. Asked for comment on Trump's tweet, specifically if it violates the company's terms of service, a Twitter spokesperson told BuzzFeed News it “does not comment on individual accounts for privacy and security reasons.”

It's still unclear if the 280 character test will be expanded to all Twitter users — including Trump. So for now, the president will have to keep posting unthreaded tweetstorms about world leaders, natural disasters, and TV ratings.

LINK: Twitter Tests Doubling Its Character Limit To 280

LINK: Twitter Might Increase The Character Limit To 280 And People Responded With 2: “NO”

Source: BuzzFeed, “Donald Trump Doesn't Have Access To Twitter's New 280 Character Limit”

Editor’s note: today’s post is by Janet Kuo and Kenneth Owens, Software Engineers at Google.

This post talks about recent updates to the DaemonSet and StatefulSet API objects for Kubernetes. We explore these features using Apache ZooKeeper and Apache Kafka StatefulSets and a Prometheus node exporter DaemonSet.

In Kubernetes 1.6, we added the RollingUpdate update strategy to the DaemonSet API Object. Configuring your DaemonSets with the RollingUpdate strategy causes the DaemonSet controller to perform automated rolling updates to the Pods in your DaemonSets when their spec.template is updated.

In Kubernetes 1.7, we enhanced the DaemonSet controller to track a history of revisions to the PodTemplateSpecs of DaemonSets. This allows the DaemonSet controller to roll back an update. We also added the RollingUpdate strategy to the StatefulSet API Object, and implemented revision history tracking for the StatefulSet controller. Additionally, we added the Parallel pod management policy to support stateful applications that require Pods with unique identities but not ordered Pod creation and termination.

StatefulSet rolling update and Pod management policy

First, we’re going to demonstrate how to use StatefulSet rolling updates and Pod management policies by deploying a ZooKeeper ensemble and a Kafka cluster.

Prerequisites

To follow along, you’ll need to set up a Kubernetes 1.7 cluster with at least 3 schedulable nodes. Each node needs 1 CPU and 2 GiB of memory available. You will also need either a dynamic provisioner to allow the StatefulSet controller to provision 6 persistent volumes (PVs) with 10 GiB each, or you will need to manually provision the PVs prior to deploying the ZooKeeper ensemble or deploying the Kafka cluster.

Deploying a ZooKeeper ensemble

Apache ZooKeeper is a strongly consistent, distributed system used by other distributed systems for cluster coordination and configuration management.
Note: You can create a ZooKeeper ensemble using this zookeeper_mini.yaml manifest. You can learn more about running a ZooKeeper ensemble on Kubernetes here, as well as a more in-depth explanation of the manifest and its contents.

When you apply the manifest, you will see output like the following.

    $ kubectl apply -f zookeeper_mini.yaml
    service "zk-hs" created
    service "zk-cs" created
    poddisruptionbudget "zk-pdb" created
    statefulset "zk" created

The manifest creates an ensemble of three ZooKeeper servers using a StatefulSet, zk; a Headless Service, zk-hs, to control the domain of the ensemble; a Service, zk-cs, that clients can use to connect to the ready ZooKeeper instances; and a PodDisruptionBudget, zk-pdb, that allows for one planned disruption. (Note that while this ensemble is suitable for demonstration purposes, it isn’t sized correctly for production use.)

If you use kubectl get to watch Pod creation in another terminal you will see that, in contrast to the OrderedReady strategy (the default policy that implements the full version of the StatefulSet guarantees), all of the Pods in the zk StatefulSet are created in parallel.

    $ kubectl get po -lapp=zk -w
    NAME   READY   STATUS              RESTARTS   AGE
    zk-0   0/1     Pending             0          0s
    zk-0   0/1     Pending             0          0s
    zk-1   0/1     Pending             0          0s
    zk-1   0/1     Pending             0          0s
    zk-0   0/1     ContainerCreating   0          0s
    zk-2   0/1     Pending             0          0s
    zk-1   0/1     ContainerCreating   0          0s
    zk-2   0/1     Pending             0          0s
    zk-2   0/1     ContainerCreating   0          0s
    zk-0   0/1     Running             0          10s
    zk-2   0/1     Running             0          11s
    zk-1   0/1     Running             0          19s
    zk-0   1/1     Running             0          20s
    zk-1   1/1     Running             0          30s
    zk-2   1/1     Running             0          30s

This is because the zookeeper_mini.yaml manifest sets the podManagementPolicy of the StatefulSet to Parallel.

    apiVersion: apps/v1beta1
    kind: StatefulSet
    metadata:
      name: zk
    spec:
      serviceName: zk-hs
      replicas: 3
      updateStrategy:
        type: RollingUpdate
      podManagementPolicy: Parallel
      …

Many distributed systems, like ZooKeeper, do not require ordered creation and termination for their processes.
You can use the Parallel Pod management policy to accelerate the creation and deletion of StatefulSets that manage these systems. Note that, when Parallel Pod management is used, the StatefulSet controller will not block when it fails to create a Pod. Ordered, sequential Pod creation and termination is performed when a StatefulSet’s podManagementPolicy is set to OrderedReady.

Deploying a Kafka Cluster

Apache Kafka is a popular distributed streaming platform. Kafka producers write data to partitioned topics which are stored, with a configurable replication factor, on a cluster of brokers. Consumers consume the produced data from the partitions stored on the brokers.

Note: Details of the manifest’s contents can be found here. You can learn more about running a Kafka cluster on Kubernetes here.

To create a cluster, you only need to download and apply the kafka_mini.yaml manifest. When you apply the manifest, you will see output like the following:

    $ kubectl apply -f kafka_mini.yaml
    service "kafka-hs" created
    poddisruptionbudget "kafka-pdb" created
    statefulset "kafka" created

The manifest creates a three broker cluster using the kafka StatefulSet; a Headless Service, kafka-hs, to control the domain of the brokers; and a PodDisruptionBudget, kafka-pdb, that allows for one planned disruption. The brokers are configured to use the ZooKeeper ensemble we created above by connecting through the zk-cs Service.
As with the ZooKeeper ensemble deployed above, this Kafka cluster is fine for demonstration purposes, but it’s probably not sized correctly for production use.

If you watch Pod creation, you will notice that, like the ZooKeeper ensemble created above, the Kafka cluster uses the Parallel podManagementPolicy.

    $ kubectl get po -lapp=kafka -w
    NAME      READY   STATUS              RESTARTS   AGE
    kafka-0   0/1     Pending             0          0s
    kafka-0   0/1     Pending             0          0s
    kafka-1   0/1     Pending             0          0s
    kafka-1   0/1     Pending             0          0s
    kafka-2   0/1     Pending             0          0s
    kafka-0   0/1     ContainerCreating   0          0s
    kafka-2   0/1     Pending             0          0s
    kafka-1   0/1     ContainerCreating   0          0s
    kafka-1   0/1     Running             0          11s
    kafka-0   0/1     Running             0          19s
    kafka-1   1/1     Running             0          23s
    kafka-0   1/1     Running             0          32s

Producing and consuming data

You can use kubectl run to execute the kafka-topics.sh script to create a topic named test.

    $ kubectl run -ti --image=gcr.io/google_containers/kubernetes-kafka:1.0-10.2.1 createtopic --restart=Never --rm -- kafka-topics.sh --create \
    > --topic test \
    > --zookeeper zk-cs.default.svc.cluster.local:2181 \
    > --partitions 1 \
    > --replication-factor 3

Now you can use kubectl run to execute the kafka-console-consumer.sh command to listen for messages.

    $ kubectl run -ti --image=gcr.io/google_containers/kubernetes-kafka:1.0-10.2.1 consume --restart=Never --rm -- kafka-console-consumer.sh --topic test --bootstrap-server kafka-0.kafka-hs.default.svc.cluster.local:9093

In another terminal, you can run the kafka-console-producer.sh command.

    $ kubectl run -ti --image=gcr.io/google_containers/kubernetes-kafka:1.0-10.2.1 produce --restart=Never --rm \
    > -- kafka-console-producer.sh --topic test --broker-list kafka-0.kafka-hs.default.svc.cluster.local:9093,kafka-1.kafka-hs.default.svc.cluster.local:9093,kafka-2.kafka-hs.default.svc.cluster.local:9093

Output from the second terminal appears in the first terminal. If you continue to produce and consume messages while updating the cluster, you will notice that no messages are lost.
You may see error messages as the leader for the partition changes when individual brokers are updated, but the client retries until the message is committed. This is due to the ordered, sequential nature of StatefulSet rolling updates which we will explore further in the next section.

Updating the Kafka cluster

StatefulSet updates are like DaemonSet updates in that they are both configured by setting the spec.updateStrategy of the corresponding API object. When the update strategy is set to OnDelete, the respective controllers will only create new Pods when a Pod in the StatefulSet or DaemonSet has been deleted. When the update strategy is set to RollingUpdate, the controllers will delete and recreate Pods when a modification is made to the spec.template field of a DaemonSet or StatefulSet. You can use rolling updates to change the configuration (via environment variables or command line parameters), resource requests, resource limits, container images, labels, and/or annotations of the Pods in a StatefulSet or DaemonSet. Note that all updates are destructive, always requiring that each Pod in the DaemonSet or StatefulSet be destroyed and recreated. StatefulSet rolling updates differ from DaemonSet rolling updates in that Pod termination and creation is ordered and sequential.

You can patch the kafka StatefulSet to reduce the CPU resource request to 250m.

    $ kubectl patch sts kafka --type='json' -p='[{"op": "replace", "path": "/spec/template/spec/containers/0/resources/requests/cpu", "value":"250m"}]'
    statefulset "kafka" patched

If you watch the status of the Pods in the StatefulSet, you will see that each Pod is deleted and recreated in reverse ordinal order (starting with the Pod with the largest ordinal and progressing to the smallest).
The controller waits for each updated Pod to be running and ready before updating the subsequent Pod.

    $ kubectl get po -lapp=kafka -w
    NAME      READY   STATUS              RESTARTS   AGE
    kafka-0   1/1     Running             0          13m
    kafka-1   1/1     Running             0          13m
    kafka-2   1/1     Running             0          13m
    kafka-2   1/1     Terminating         0          14m
    kafka-2   0/1     Terminating         0          14m
    kafka-2   0/1     Terminating         0          14m
    kafka-2   0/1     Terminating         0          14m
    kafka-2   0/1     Pending             0          0s
    kafka-2   0/1     Pending             0          0s
    kafka-2   0/1     ContainerCreating   0          0s
    kafka-2   0/1     Running             0          10s
    kafka-2   1/1     Running             0          21s
    kafka-1   1/1     Terminating         0          14m
    kafka-1   0/1     Terminating         0          14m
    kafka-1   0/1     Terminating         0          14m
    kafka-1   0/1     Terminating         0          14m
    kafka-1   0/1     Pending             0          0s
    kafka-1   0/1     Pending             0          0s
    kafka-1   0/1     ContainerCreating   0          0s
    kafka-1   0/1     Running             0          11s
    kafka-1   1/1     Running             0          21s
    kafka-0   1/1     Terminating         0          14m
    kafka-0   0/1     Terminating         0          14m
    kafka-0   0/1     Terminating         0          14m
    kafka-0   0/1     Terminating         0          14m
    kafka-0   0/1     Pending             0          0s
    kafka-0   0/1     Pending             0          0s
    kafka-0   0/1     ContainerCreating   0          0s
    kafka-0   0/1     Running             0          10s
    kafka-0   1/1     Running             0          22s

Note that unplanned disruptions will not lead to unintentional updates during the update process. That is, the StatefulSet controller will always recreate the Pod at the correct version to ensure the ordering of the update is preserved. If a Pod is deleted, and if it has already been updated, it will be created from the updated version of the StatefulSet’s spec.template. If the Pod has not already been updated, it will be created from the previous version of the StatefulSet’s spec.template. We will explore this further in the following sections.

Staging an update

Depending on how your organization handles deployments and configuration modifications, you may want or need to stage updates to a StatefulSet prior to allowing the roll out to progress. You can accomplish this by setting a partition for the RollingUpdate.
When the StatefulSet controller detects a partition in the updateStrategy of a StatefulSet, it will only apply the updated version of the StatefulSet’s spec.template to Pods whose ordinal is greater than or equal to the value of the partition.

You can patch the kafka StatefulSet to add a partition to the RollingUpdate update strategy. If you set the partition to a number greater than or equal to the StatefulSet’s spec.replicas (as below), any subsequent updates you perform to the StatefulSet’s spec.template will be staged for roll out, but the StatefulSet controller will not start a rolling update.

    $ kubectl patch sts kafka -p '{"spec":{"updateStrategy":{"type":"RollingUpdate","rollingUpdate":{"partition":3}}}}'
    statefulset "kafka" patched

If you patch the StatefulSet to set the requested CPU to 0.3, you will notice that none of the Pods are updated.

    $ kubectl patch sts kafka --type='json' -p='[{"op": "replace", "path": "/spec/template/spec/containers/0/resources/requests/cpu", "value":"0.3"}]'
    statefulset "kafka" patched

Even if you delete a Pod and wait for the StatefulSet controller to recreate it, you will notice that the Pod is recreated with the current CPU request.

    $ kubectl delete po kafka-1
    pod "kafka-1" deleted

    $ kubectl get po kafka-1 -w
    NAME      READY   STATUS              RESTARTS   AGE
    kafka-1   0/1     ContainerCreating   0          10s
    kafka-1   0/1     Running             0          19s
    kafka-1   1/1     Running             0          21s

    $ kubectl get po kafka-1 -o yaml
    apiVersion: v1
    kind: Pod
    metadata:
      …
        resources:
          requests:
            cpu: 250m
            memory: 1Gi

Rolling out a canary

Often, we want to verify an image update or configuration change on a single instance of an application before rolling it out globally.
If you modify the partition created above to be 2, the StatefulSet controller will roll out a canary that can be used to verify that the update is working as intended.

    $ kubectl patch sts kafka -p '{"spec":{"updateStrategy":{"type":"RollingUpdate","rollingUpdate":{"partition":2}}}}'
    statefulset "kafka" patched

You can watch the StatefulSet controller update the kafka-2 Pod and pause after the update is complete.

    $ kubectl get po -lapp=kafka -w
    NAME      READY   STATUS              RESTARTS   AGE
    kafka-0   1/1     Running             0          50m
    kafka-1   1/1     Running             0          10m
    kafka-2   1/1     Running             0          29s
    kafka-2   1/1     Terminating         0          34s
    kafka-2   0/1     Terminating         0          38s
    kafka-2   0/1     Terminating         0          39s
    kafka-2   0/1     Terminating         0          39s
    kafka-2   0/1     Pending             0          0s
    kafka-2   0/1     Pending             0          0s
    kafka-2   0/1     Terminating         0          20s
    kafka-2   0/1     Terminating         0          20s
    kafka-2   0/1     Pending             0          0s
    kafka-2   0/1     Pending             0          0s
    kafka-2   0/1     ContainerCreating   0          0s
    kafka-2   0/1     Running             0          19s
    kafka-2   1/1     Running             0          22s

Phased roll outs

Similar to rolling out a canary, you can roll out updates based on a phased progression (e.g. linear, geometric, or exponential roll outs).

If you patch the kafka StatefulSet to set the partition to 1, the StatefulSet controller updates one more broker.

    $ kubectl patch sts kafka -p '{"spec":{"updateStrategy":{"type":"RollingUpdate","rollingUpdate":{"partition":1}}}}'
    statefulset "kafka" patched

If you set it to 0, the StatefulSet controller updates the final broker and completes the update.

    $ kubectl patch sts kafka -p '{"spec":{"updateStrategy":{"type":"RollingUpdate","rollingUpdate":{"partition":0}}}}'
    statefulset "kafka" patched

Note that you don’t have to decrement the partition by one. For a larger StatefulSet, for example one with 100 replicas, you might use a progression more like 100, 99, 90, 50, 0.
In this case, you would stage your update, deploy a canary, roll out to 10 instances, update fifty percent of the Pods, and then complete the update.

Cleaning up

To delete the API Objects created above, you can use kubectl delete on the two manifests you used to create the ZooKeeper ensemble and the Kafka cluster.

    $ kubectl delete -f kafka_mini.yaml
    service "kafka-hs" deleted
    poddisruptionbudget "kafka-pdb" deleted
    statefulset "kafka" deleted

    $ kubectl delete -f zookeeper_mini.yaml
    service "zk-hs" deleted
    service "zk-cs" deleted
    poddisruptionbudget "zk-pdb" deleted
    statefulset "zk" deleted

By design, the StatefulSet controller does not delete any persistent volume claims (PVCs): the PVCs created for the ZooKeeper ensemble and the Kafka cluster must be manually deleted. Depending on the storage reclamation policy of your cluster, you may also need to manually delete the backing PVs.

DaemonSet rolling update, history, and rollback

In this section, we’re going to show you how to perform a rolling update on a DaemonSet, look at its history, and then perform a rollback after a bad rollout. We will use a DaemonSet to deploy a Prometheus node exporter on each Kubernetes node in the cluster. These node exporters export node metrics to the Prometheus monitoring system. For the sake of simplicity, we’ve omitted the installation of the Prometheus server and the service for communication with DaemonSet pods from this blogpost.

Prerequisites

To follow along with this section of the blog, you need a working Kubernetes 1.7 cluster and kubectl version 1.7 or later.
If you followed along with the first section, you can use the same cluster.

DaemonSet rolling update: Prometheus node exporters

First, prepare the node exporter DaemonSet manifest to run a v0.13 Prometheus node exporter on every node in the cluster:

    $ cat >> node-exporter-v0.13.yaml <<EOF
    apiVersion: extensions/v1beta1
    kind: DaemonSet
    metadata:
      name: node-exporter
    spec:
      updateStrategy:
        type: RollingUpdate
      template:
        metadata:
          labels:
            app: node-exporter
          name: node-exporter
        spec:
          containers:
          - image: prom/node-exporter:v0.13.0
            name: node-exporter
            ports:
            - containerPort: 9100
              hostPort: 9100
              name: scrape
          hostNetwork: true
          hostPID: true
    EOF

Note that you need to enable the DaemonSet rolling update feature by explicitly setting DaemonSet .spec.updateStrategy.type to RollingUpdate.

Apply the manifest to create the node exporter DaemonSet:

    $ kubectl apply -f node-exporter-v0.13.yaml --record
    daemonset "node-exporter" created

Wait for the first DaemonSet rollout to complete:

    $ kubectl rollout status ds node-exporter
    daemon set "node-exporter" successfully rolled out

You should see that each of your nodes runs one copy of the node exporter pod:

    $ kubectl get pods -l app=node-exporter -o wide

To perform a rolling update on the node exporter DaemonSet, prepare a manifest that includes the v0.14 Prometheus node exporter:

    $ cat node-exporter-v0.13.yaml | sed "s/v0.13.0/v0.14.0/g" > node-exporter-v0.14.yaml

Then apply the v0.14 node exporter DaemonSet:

    $ kubectl apply -f node-exporter-v0.14.yaml --record
    daemonset "node-exporter" configured

Wait for the DaemonSet rolling update to complete:

    $ kubectl rollout status ds node-exporter
    …
    Waiting for rollout to finish: 3 out of 4 new pods have been updated…
    Waiting for rollout to finish: 3 of 4 updated pods are available…
    daemon set "node-exporter" successfully rolled out

We just triggered a DaemonSet rolling update by updating the DaemonSet template. By default, one old DaemonSet pod will be killed and one new DaemonSet pod will be created at a time.
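
To make the one-pod-at-a-time behavior concrete, here is a small Python sketch that simulates it. This is a toy model under stated assumptions, not the DaemonSet controller's actual code: the function name `rolling_update`, the node names, and the `is_available` readiness callback are all hypothetical, and the sketch assumes the default of replacing a single pod at a time and halting when a new pod never becomes available.

```python
from typing import Callable, Dict, List


def rolling_update(
    nodes: Dict[str, str],
    new_image: str,
    is_available: Callable[[str, str], bool],
) -> List[str]:
    """Toy model of a one-pod-at-a-time rolling update.

    nodes maps a node name to the image its pod currently runs.
    is_available(node, image) is a hypothetical readiness check.
    Returns the nodes whose pods were replaced; stops at the first
    replaced pod that never becomes available.
    """
    replaced: List[str] = []
    for node in sorted(nodes):
        if nodes[node] == new_image:
            continue  # this pod already runs the new template
        nodes[node] = new_image  # kill the old pod, create the new one
        replaced.append(node)
        if not is_available(node, new_image):
            break  # rollout halts; remaining nodes keep the old image
    return replaced


if __name__ == "__main__":
    cluster = {f"node-{i}": "prom/node-exporter:v0.13.0" for i in range(4)}
    done = rolling_update(
        cluster, "prom/node-exporter:v0.14.0", lambda node, image: True
    )
    print(done)
```

Passing a readiness check that always returns False models an unpullable image: only the first node is touched and the other pods keep running the old version.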
Now we’ll cause a rollout to fail by updating the image to an invalid value:

    $ cat node-exporter-v0.13.yaml | sed "s/v0.13.0/bad/g" > node-exporter-bad.yaml
    $ kubectl apply -f node-exporter-bad.yaml --record
    daemonset "node-exporter" configured

Notice that the rollout never finishes:

    $ kubectl rollout status ds node-exporter
    Waiting for rollout to finish: 0 out of 4 new pods have been updated…
    Waiting for rollout to finish: 1 out of 4 new pods have been updated…
    # Use ^C to exit

This behavior is expected. We mentioned earlier that a DaemonSet rolling update kills and creates one pod at a time. Because the new pod never becomes available, the rollout is halted, preventing the invalid specification from propagating to more than one node. StatefulSet rolling updates implement the same behavior with respect to failed deployments. Unsuccessful updates are blocked until they are corrected via roll back or by rolling forward with a specification.

    $ kubectl get pods -l app=node-exporter
    NAME                  READY   STATUS         RESTARTS   AGE
    node-exporter-f2n14   0/1     ErrImagePull   0          3m
    …

    # N = number of nodes
    $ kubectl get ds node-exporter
    NAME            DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR   AGE
    node-exporter   N         N         N-1     1            N           <none>          46m

DaemonSet history, rollbacks, and rolling forward

Next, perform a rollback.
Take a look at the node exporter DaemonSet rollout history:

    $ kubectl rollout history ds node-exporter
    daemonsets "node-exporter"
    REVISION   CHANGE-CAUSE
    1          kubectl apply --filename=node-exporter-v0.13.yaml --record=true
    2          kubectl apply --filename=node-exporter-v0.14.yaml --record=true
    3          kubectl apply --filename=node-exporter-bad.yaml --record=true

Check the details of the revision you want to roll back to:

    $ kubectl rollout history ds node-exporter --revision=2
    daemonsets "node-exporter" with revision #2
    Pod Template:
      Labels:        app=node-exporter
      Containers:
       node-exporter:
        Image:       prom/node-exporter:v0.14.0
        Port:        9100/TCP
        Environment: <none>
        Mounts:      <none>
      Volumes:       <none>

You can quickly roll back to any DaemonSet revision you found through kubectl rollout history:

    # Roll back to the last revision
    $ kubectl rollout undo ds node-exporter
    daemonset "node-exporter" rolled back

    # Or use --to-revision to roll back to a specific revision
    $ kubectl rollout undo ds node-exporter --to-revision=2
    daemonset "node-exporter" rolled back

A DaemonSet rollback is done by rolling forward.
Therefore, after the rollback, DaemonSet revision 2 becomes revision 4 (current revision):

    $ kubectl rollout history ds node-exporter
    daemonsets "node-exporter"
    REVISION   CHANGE-CAUSE
    1          kubectl apply --filename=node-exporter-v0.13.yaml --record=true
    3          kubectl apply --filename=node-exporter-bad.yaml --record=true
    4          kubectl apply --filename=node-exporter-v0.14.yaml --record=true

The node exporter DaemonSet is now healthy again:

    $ kubectl rollout status ds node-exporter
    daemon set "node-exporter" successfully rolled out

    # N = number of nodes
    $ kubectl get ds node-exporter
    NAME            DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR   AGE
    node-exporter   N         N         N       N            N           <none>          46m

If the current DaemonSet revision is specified while performing a rollback, the rollback is skipped:

    $ kubectl rollout undo ds node-exporter --to-revision=4
    daemonset "node-exporter" skipped rollback (current template already matches revision 4)

You will see this complaint from kubectl if the DaemonSet revision is not found:

    $ kubectl rollout undo ds node-exporter --to-revision=10
    error: unable to find specified revision 10 in history

Note that kubectl rollout history and kubectl rollout status support StatefulSets, too!

Cleaning up

    $ kubectl delete ds node-exporter

What’s next for DaemonSet and StatefulSet

Rolling updates and roll backs close an important feature gap for DaemonSets and StatefulSets. As we plan for Kubernetes 1.8, we want to continue to focus on advancing the core controllers to GA. This likely means that some advanced feature requests (e.g. automatic roll back, infant mortality detection) will be deferred in favor of ensuring the consistency, usability, and stability of the core controllers.
We welcome feedback and contributions, so please feel free to reach out on Slack, to ask questions on Stack Overflow, or open issues or pull requests on GitHub.

- Post questions (or answer questions) on Stack Overflow
- Join the community portal for advocates on K8sPort
- Follow us on Twitter @Kubernetesio for latest updates
- Connect with the community on Slack
- Get involved with the Kubernetes project on GitHub
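
As a recap of the StatefulSet partition rule described in this post (only Pods whose ordinal is greater than or equal to the partition receive the updated template, highest ordinal first), the following Python sketch computes which Pods a given partition value touches. The function names are hypothetical, and the code only models the rule as stated in the post; it does not talk to a cluster.

```python
from typing import List


def updated_ordinals(replicas: int, partition: int) -> List[int]:
    """Ordinals that receive the new template, in update order (highest first)."""
    return [o for o in range(replicas - 1, -1, -1) if o >= partition]


def cumulative_updated(replicas: int, partitions: List[int]) -> List[int]:
    """Total number of updated Pods after each successive partition value."""
    return [len(updated_ordinals(replicas, p)) for p in partitions]


if __name__ == "__main__":
    # Three Kafka brokers: staged (3), canary (2), one more (1), complete (0).
    for partition in (3, 2, 1, 0):
        print(partition, updated_ordinals(3, partition))

    # The 100-replica progression mentioned in the post.
    print(cumulative_updated(100, [100, 99, 90, 50, 0]))
```

With three replicas, a partition of 3 updates nothing (the change is only staged), 2 updates just kafka-2, and 0 updates every broker. For the 100-replica progression the cumulative counts are 0, 1, 10, 50, and 100 Pods.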

Source: kubernetes

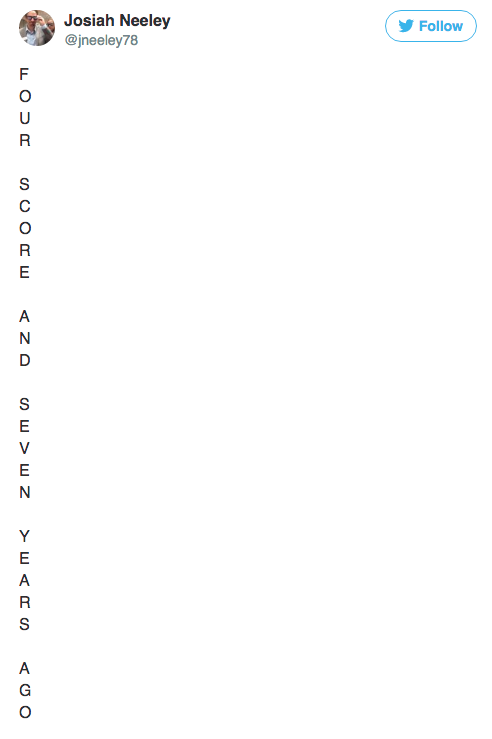

Twitter / Via Twitter: @jneeley78

Are you worried about living in a world where every tweet is that long? Then let me offer you some words of advice, sent from the future — or at least from a place where tweets have always been this long: Japan.

Thanks to the way the Japanese language works, you can sometimes say an entire word with a single character. Think of it like how you can say “pizza” with one single emoji. Even if it's not always one word per character, you can definitely say a whole lot more in 140 than you can in English.

Take, for example, this tweet by Misato Nagoya, a lifestyle writer for BuzzFeed Japan:

It's exactly 140 characters, because we've mastered the art of writing things exactly 140 characters long in Japanese too. But here's how you'd translate it to English:

I don't want to go on a trip abroad, don't want a cute pet, don't want an expensive car, don't want fashionable clothes. Not at all. All I want is just to live in a comfortable home, cook by myself, and eat what I wanna eat. That's all. But it is not easy to make these tiny dreams come true. My life sucks.

That tweet is pretty depressing — trust me, Misato is fun in real life! — but it's also 307 characters long. And it's totally normal for Japanese Twitter.

Here's another one from Misato:

Again, exactly 140. (We're good at this.) In English:

If I have baby, I would love to raise with lots of love. BUT after real moms told me how hard it is, I think it is almost impossible for me. Too few kindergartens in the city to find a place to leave my kid. The difficulty of keeping a work-life balance. The high costs of raising kid including education. I am already overwhelmed doing things just for me. How could I take care of my child too? So all I can and should do now is to support moms who are raising children.

That's 471 characters!

But this is just how Twitter is in Japan, and we love it just as much as you do in America. And we have just as many hilarious memes, weird Twitter subcultures, and massive cultural moments based on tweets as you guys do.

Twitter even seems to recognize that we don't need any more characters here — while they are testing the new 280-character option around the world, they are not offering it in China, South Korea or Japan, because we've all been living in the long-tweet future since Day 1.

Don't worry, it's fine here in the long-tweet future. You'll love it, or at least you'll only hate it as much as you hate Twitter already.

Source: BuzzFeed, “Longer Tweets Are Fine. I Know, Because We've Had Them In Japan Forever.”

AWS CloudFormation now allows you to protect a stack from being accidentally deleted. You can enable termination protection on a stack when you create it. If you attempt to delete a stack with termination protection enabled, the deletion fails and the stack, including its status, remains unchanged. To delete such a stack, you first need to disable termination protection.
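
As a rough sketch of that workflow in Python: the helper names below are hypothetical, and the code assumes a boto3 CloudFormation client, whose `update_termination_protection` and `delete_stack` calls it wraps. The client is passed in as a parameter, so the block itself does not import boto3 or need AWS credentials.

```python
from typing import Any


def set_termination_protection(cfn: Any, stack_name: str, enabled: bool) -> None:
    """Turn termination protection on or off for an existing stack."""
    cfn.update_termination_protection(
        StackName=stack_name,
        EnableTerminationProtection=enabled,
    )


def delete_protected_stack(cfn: Any, stack_name: str) -> None:
    """Disable termination protection first, then delete the stack."""
    set_termination_protection(cfn, stack_name, False)
    cfn.delete_stack(StackName=stack_name)


# Usage (assumes boto3 is installed and AWS credentials are configured;
# "my-stack" is a placeholder name):
#   import boto3
#   cfn = boto3.client("cloudformation")
#   set_termination_protection(cfn, "my-stack", True)
#   delete_protected_stack(cfn, "my-stack")
```

Keeping the disable step inside a separately named helper makes deleting a protected stack a deliberate two-step action, which is the point of the feature.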

Source: aws.amazon.com